Although the US leads in developing generative artificial intelligence technology, China has recently enacted regulation. This is to some extent motivated by its desire to maintain stability through internet censorship, but there are also forward-thinking angles to its regulation that could provide insights for European policymakers when developing their own frameworks.

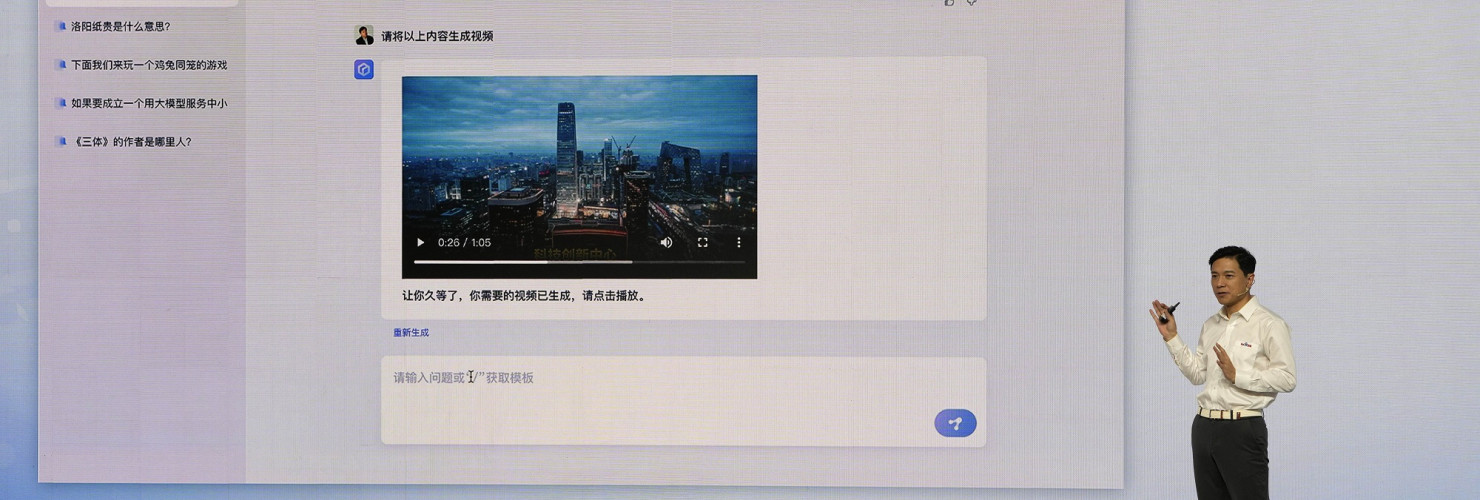

In April, China’s internet regulator published draft provisions for governing generative artificial intelligence (AI) (生成式人工智能), aiming to ensure the “healthy development and standardized application” of AI services that can create text, videos, voice, and images. OpenAI’s ChatGPT is just one of many such technologies swiftly emerging around the globe. Although the US leads in developing them, the Chinese government has moved ahead of it in creating a regulatory framework. China’s answer to ChatGPT, Baidu’s ERNIE Bot, is barely out of the gate after an underwhelming debut. But a deeper look at the development and commercialization of these technologies in China reveals that the government is actively trying to stay ahead of this growing trend.

This is in large part prompted by the Chinese Communist Party’s (CCP) desire to maintain social and political stability by keeping its internet censorship mechanisms intact. However, the ability to roll out broad restrictions unchallenged also allows Beijing to move fast. Information controls aside, China’s forward-thinking approach to regulating the input and output of large language models (LLMs) may still offer European lawmakers useful angles when developing their own regulatory framework.

Generative AI has seen explosive growth in the last few years, showing its transformative potential for economies and societies but also raising massive governance challenges. ChatGPT and its peers have demonstrated stunning ability to comprehend and compose text, which will only improve with time. Image generators like Midjourney can generate original artwork based on text prompts. Advances in virtual and augmented reality technology to create immersive simulations are transforming fields from gaming to healthcare. Alongside these breakthroughs, generative AI already permeates social media in the form of photo- and video-enhancing filters. With these technologies have come a range of privacy, security, ethical, and socioeconomic concerns.

Widespread adoption for commercial use

In China, ChatGPT was promptly banned by government censors, and companies have been scrambling to develop domestic alternatives. But generative AI has already seen widespread adoption for commercial use. A report by Tsinghua University’s Institute for AI cites several examples, including TV programs that showcase voice generation and poem-writing programs. In one case, generative AI was used to salvage previously shot film footage by replacing the faces of actors who had run afoul of the state’s moral guidelines. China’s official state news agency Xinhua regularly uses a simulated “AI anchor” in a portion of its online news segments. In a white paper released in 2020, Tencent stressed the beneficial potential of AI-generated content in areas from e-commerce to healthcare.

With widespread adoption soon came controversy. In 2019, a mobile app called ZAO that allowed users to swap their faces into short movie clips became wildly popular overnight. It was pulled from app stores almost as quickly after regulators found that it violated user privacy and data rights. Generative AI, especially in the form of machine-learning-altered videos known as deepfakes, also enables far more nefarious practices. In 2021, Chinese authorities found that criminals had created AI-generated facial videos from scraped internet photos to illegally sign up for online payment accounts. China is not alone in facing such threats: in 2022, the mayors of several European cities were deceived in video calls by someone using altered video footage to impersonate the mayor of Kyiv.

Regulators everywhere struggle to stay ahead of the tech

While the stories of generative AI misuse are similar, governments’ reactions have differed. ChatGPT caught the drafters of the European Union’s AI Act off guard, showing that lawmakers are struggling with general-purpose AI systems that can enable both innocuous and highly harmful applications. Beijing, meanwhile, took early steps. In response to the ZAO incident, the Cyberspace Administration of China (CAC) ordered online information service providers to review and clearly label any AI-generated content. The rules also outlawed the use of generative AI to produce and spread fake news. Building on these earlier provisions, in January this year China began enforcing legislation regulating the use of “deep synthesis” (深度合成), an earlier term for generative AI.

China’s governance efforts more broadly direct attention toward the wider societal impacts of generative models. In addition to labelling requirements, the deep synthesis regulations already included other provisions such as requiring service providers to dispel rumors and false information, submit their algorithms for review via the CAC-run filing system, and ensure that the data used to train their AI models has been lawfully obtained. The April draft rules go even further, requiring all new products to undergo a security assessment with the CAC before launching.

In addition to crafting regulation, the government has also backed startups and projects specializing in deepfake detection, like Tsinghua University’s spinoff RealAI (the EU is backing similar efforts). Mindful of the security risks associated with the spread of AI-generated content, the top think tank of the Ministry of Industry and Information Technology published China’s first-ever industry standard for the evaluation of generative AI products in March.

Beijing’s approach reflects its forward-thinking stance toward AI’s penetration into society, but also an ability to move quickly on regulation that would be unthinkable in countries governed by the rule of law. Chinese authorities are likely to embrace the deployment of large language models in useful sectors like autonomous driving or healthcare, but they are also concerned about the technology’s potential to mobilize public opinion and thus endanger state security. Underpinning China’s regulatory efforts is also a desire to maintain social and political control. For example, the draft rules stress that AI-generated content must “reflect core socialist values and … not contain content that subverts state power.”

CCP obsession with information control could bring tighter rules

Given the CCP’s obsession with information control, it remains to be seen how much longer Chinese AI will thrive. When it comes to the internet and digital technologies, China’s success has often been attributed to regulators’ initial tolerance of experimentation. To repeat that success with LLMs, Beijing might need to live with the risk that chatbots return politically sensitive information to users. Democracies may be slower at enforcing regulation, but they could ultimately create a more enabling environment for the transformative potential of AI.

Nevertheless, lawmakers in Europe should reflect on China’s regulatory efforts beyond the overbroad authority it gives itself to curtail free expression. The draft regulation places significant responsibility on providers of generative AI services, including the labeling of generated content and the protection of personal data. In gathering data for training large AI models, providers must guarantee accuracy, avoid bias, and respect intellectual property rights. They are also responsible for the output of their models – they cannot produce content that discriminates based on users’ race, gender, religion, or other characteristics.

While the feasibility of enforcement is up in the air, some of these requirements could provide food for thought as policymakers consider how this powerful new technology should be regulated in liberal democratic societies.
